
On February 18th, Voiceflow unveiled the next generation of enterprise AI customer experience. Today, V4 is available to every Voiceflow user.

We believe the future of customer experience is agentic — that engagements with customers will be fluid, proactive, intelligent, and capable. And we believe the businesses closest to their customers are best positioned to build those experiences. That means owning your AI solutions. Building internal skills. Having the ability to build, manage, and iterate without depending on a black box.

V4 is purpose-built for that future. It's a ground-up rearchitecture of how AI agents are built, how they reason, and how they scale. The complete framework for enterprise AI customer experience, from development to deployment, from measurement to iteration.

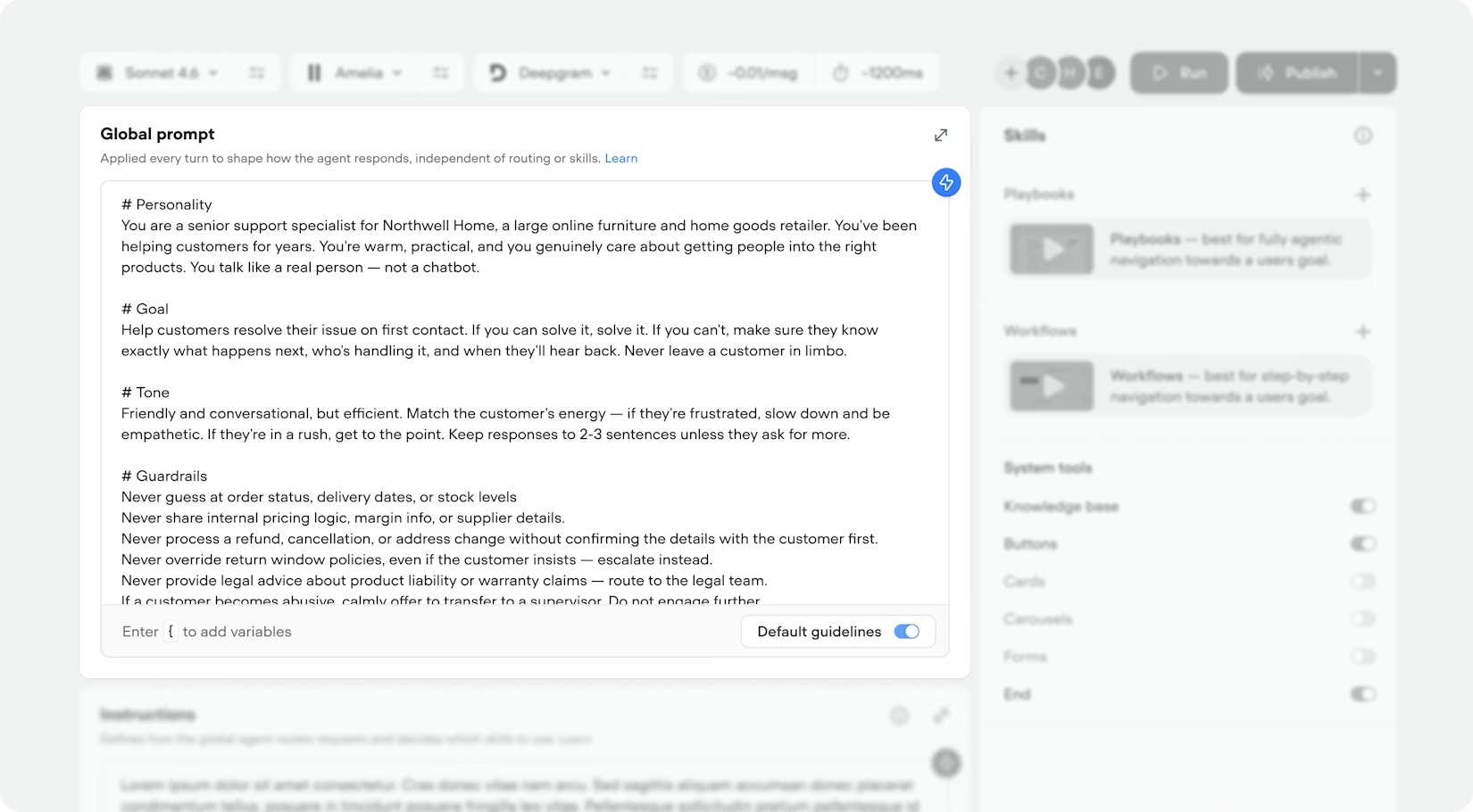

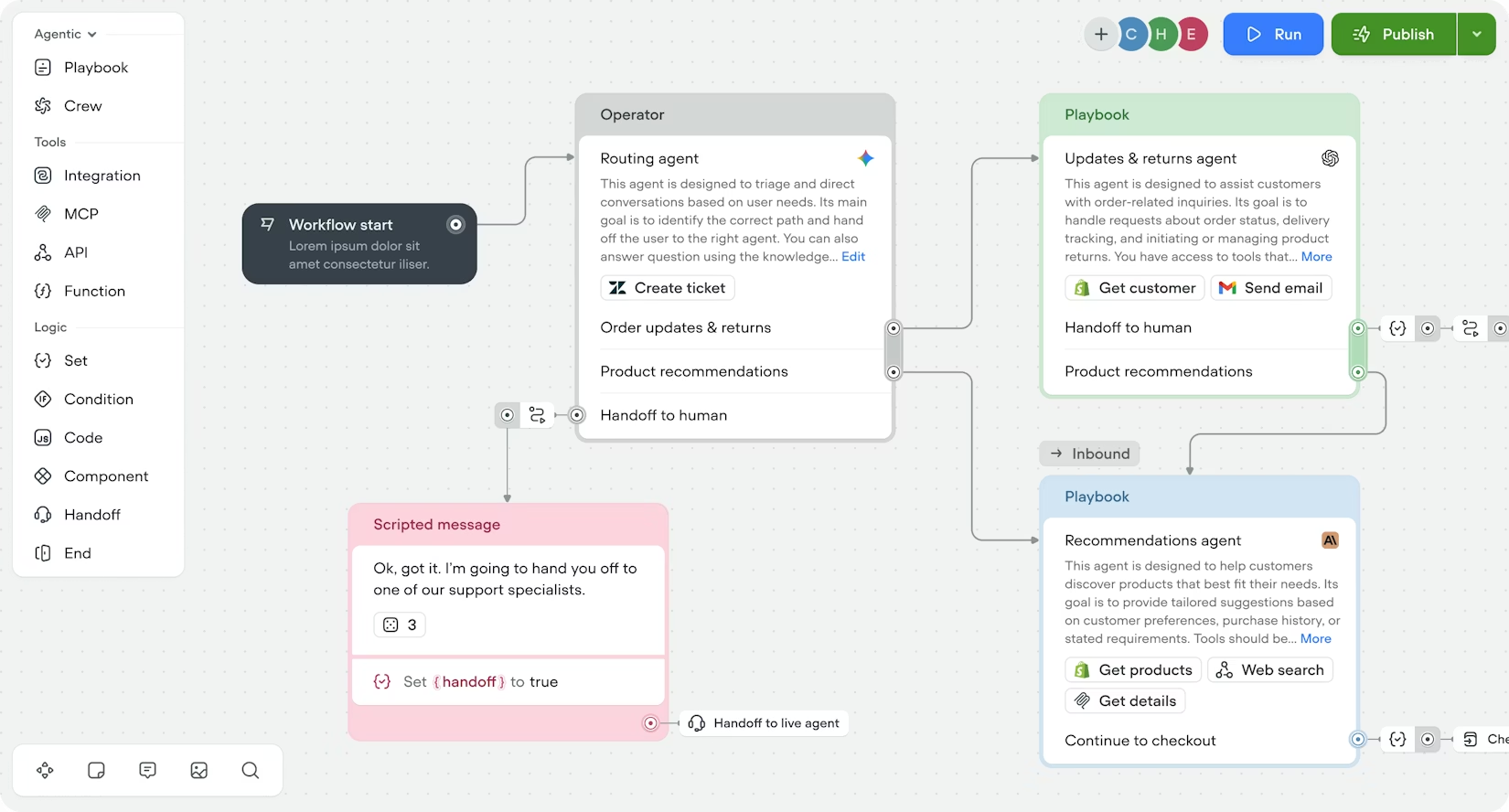

Previous-generation agent builders required you to draw every conversation path by hand. With V4’s agent as the router, you define capabilities and the agent routes each message to the right skill. Craft Playbooks for autonomous conversations and Workflows for deterministic processes, then write natural-language instructions for when to use each. The agent evaluates every message, reads your instructions, and makes the decision automatically.

Adding a new capability doesn't mean rewiring existing logic to fit the pieces together. It simply means writing one more instruction. Your agent gets smarter as you add skills — without the maintenance cost scaling with it.
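
To make the routing idea concrete, here is a minimal sketch, not Voiceflow's actual API. The `Skill` class, the `route` function, and the `fake_model` stand-in are all hypothetical names chosen for illustration; in a real agent a language model would make the choice that `fake_model` approximates with a keyword check.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Skill:
    name: str
    kind: str         # "playbook" or "workflow"
    instruction: str  # natural-language note on when to use the skill


def build_routing_prompt(skills: list[Skill], message: str) -> str:
    """Assemble the text a routing model would read: one instruction per skill."""
    lines = [f"- {s.name} ({s.kind}): {s.instruction}" for s in skills]
    return "Choose one skill.\n" + "\n".join(lines) + f"\nMessage: {message}"


def route(skills: list[Skill], message: str, choose: Callable[[str], str]) -> Skill:
    """Ask `choose` (a stand-in for a model call) for a skill name and look it up."""
    by_name = {s.name: s for s in skills}
    picked = choose(build_routing_prompt(skills, message)).strip()
    if picked not in by_name:
        raise ValueError(f"router returned unknown skill: {picked!r}")
    return by_name[picked]


def fake_model(prompt: str) -> str:
    # Assumption: a keyword check on the final "Message:" line replaces a real model.
    message = prompt.rsplit("Message: ", 1)[1].lower()
    return "refund_request" if "refund" in message else "general_help"


skills = [
    Skill("general_help", "playbook", "Use for open-ended product questions."),
    Skill("refund_request", "playbook", "Use when the customer asks for money back."),
]
# Adding a capability is one more entry; route() itself does not change.
skills.append(Skill("order_status", "workflow", "Use when the customer asks where an order is."))

chosen = route(skills, "I'd like a refund for my last order", fake_model)
print(chosen.name)
```

The point of the design is that the skill list is data: the routing logic stays the same size no matter how many skills are registered.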

Every token you send to a model costs money and competes for attention. V4's new Context Engine introduces a layered architecture that ensures the model sees only what it needs at each stage of the conversation, keeping conversations as efficient as possible.

V4 brings significantly lower token costs and better performance: faster responses, more predictable pricing, and agents that don't get confused as conversations get complex.

Most platforms force a choice: build with AI or build with control. With V4, you don’t need to compromise.

Playbooks handle open-ended conversations autonomously. Define a goal, attach tools, and the agent navigates the customer toward an outcome — calling APIs, querying the knowledge base, and reasoning through edge cases along the way.

Workflows handle structured processes deterministically and sequentially. Every step, every branch, every condition is explicitly defined on a visual canvas. No ambiguity, no variance.

In practice, this means fluid conversations that seamlessly transition between preset rules and overarching guardrails. A single agent can route to a playbook that reasons through a refund request, then hand off to a workflow that executes the refund through a locked-down API sequence. Flexibility for the customer, precision for the enterprise.
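
The refund handoff described above can be sketched roughly as follows. This is an illustration under stated assumptions, not Voiceflow's implementation: the function names, the required fields, and the 30-day eligibility rule are all hypothetical. The playbook side is reduced to a check that the needed details have been collected; the workflow side is a fixed, ordered list of steps.

```python
from typing import Callable

Step = Callable[[dict], dict]


def check_eligibility(state: dict) -> dict:
    # Explicit branch condition (hypothetical 30-day policy).
    return {**state, "eligible": state["days_since_purchase"] <= 30}


def issue_refund(state: dict) -> dict:
    # A real step would call a locked-down payments API here.
    status = "refunded" if state["eligible"] else "denied"
    return {**state, "status": status}


# The workflow is an ordered list: same steps, same order, every run.
REFUND_WORKFLOW: list[Step] = [check_eligibility, issue_refund]


def run_workflow(steps: list[Step], state: dict) -> dict:
    for step in steps:
        state = step(state)
    return state


def refund_playbook(collected: dict) -> dict:
    """Stand-in for the playbook: once the details are gathered, hand off."""
    missing = {"order_id", "days_since_purchase"} - collected.keys()
    if missing:
        # The agent would keep the conversation going to fill these in.
        return {"status": "needs_info", "missing": sorted(missing)}
    return run_workflow(REFUND_WORKFLOW, collected)


print(refund_playbook({"order_id": "A1", "days_since_purchase": 10})["status"])
```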

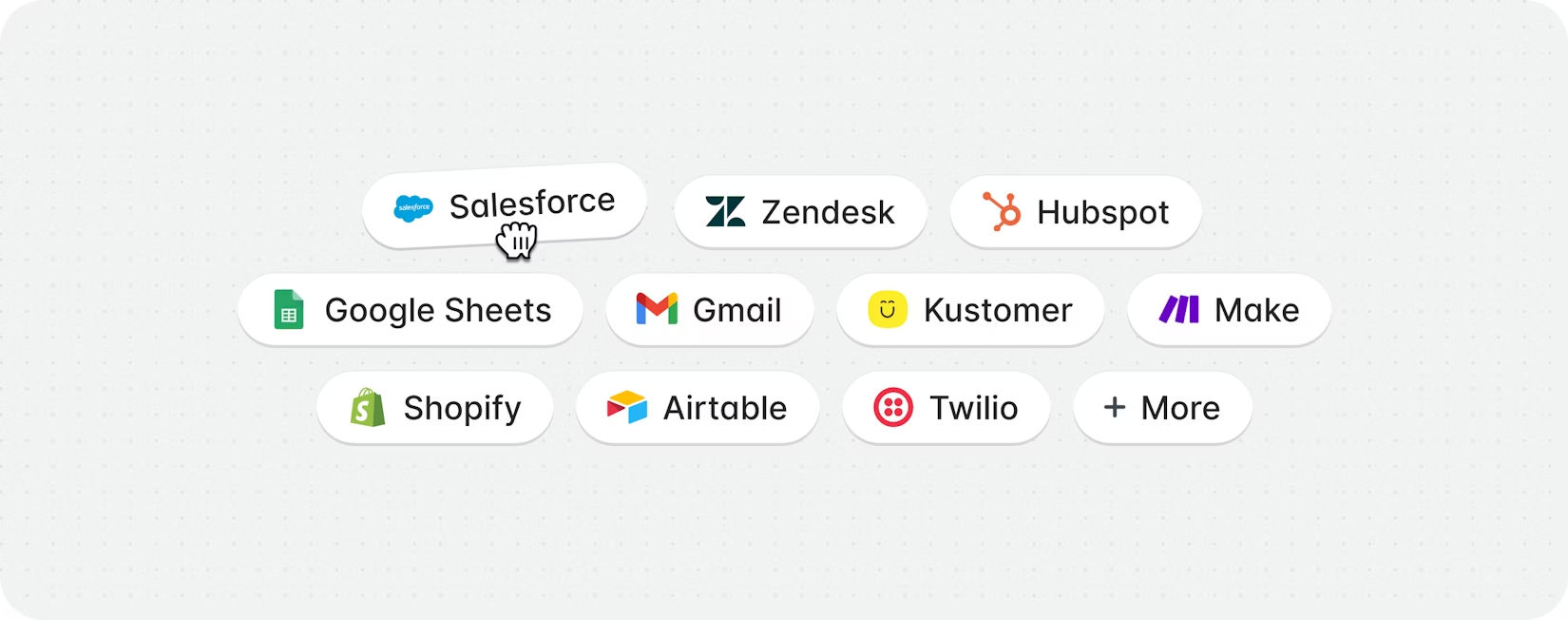

V4 treats tools as core infrastructure. API calls, JavaScript functions, MCP servers, and pre-built integrations with Salesforce, Zendesk, Shopify, and more all plug into the same system.

Add a tool to a playbook and the agent decides when to call it. Wire it into a workflow and it fires deterministically. Same tool, different execution models.
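
As a rough sketch of the two execution models, the snippet below registers one tool and calls it two ways. Everything here is hypothetical (`lookup_order`, `playbook_turn`, `workflow_step`, and the `fake_decide` stand-in for a model's tool-choice step); it only illustrates the idea that the tool definition is shared while the caller differs.

```python
from typing import Callable, Optional


def lookup_order(order_id: str) -> dict:
    """Hypothetical tool; a real one would call an order API."""
    return {"order_id": order_id, "status": "shipped"}


TOOLS: dict[str, Callable[..., dict]] = {"lookup_order": lookup_order}


def playbook_turn(decide: Callable, message: str) -> Optional[dict]:
    """Playbook style: `decide` (a model stand-in) chooses whether to call a tool."""
    call = decide(message, list(TOOLS))
    if call is None:
        return None  # the agent answered without a tool
    name, args = call
    return TOOLS[name](**args)


def workflow_step(state: dict) -> dict:
    """Workflow style: the tool always fires when this step is reached."""
    return {**state, "order": lookup_order(state["order_id"])}


def fake_decide(message: str, tool_names: list[str]):
    # Assumption: a keyword check replaces the model's decision.
    if "order" in message.lower() and "lookup_order" in tool_names:
        return ("lookup_order", {"order_id": "A1"})
    return None


print(playbook_turn(fake_decide, "Where is my order?"))
print(workflow_step({"order_id": "B2"}))
```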

And asynchronous execution means tool calls no longer block the conversation. The agent keeps talking while a slow API responds in the background, unlocking multitasking agents that feel truly magical. No more “let me look that up.” Preload a customer's order history during authentication, and open the conversation with context instead of questions.
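
The preloading pattern can be illustrated with Python's `asyncio`. This is a minimal sketch, assuming two hypothetical coroutines (`fetch_order_history` and `authenticate`) whose delays are simulated with `asyncio.sleep`; it shows the general non-blocking technique, not how V4 implements it.

```python
import asyncio


async def fetch_order_history(customer_id: str) -> list[str]:
    await asyncio.sleep(0.05)  # simulated slow API
    return [f"{customer_id}-order-1", f"{customer_id}-order-2"]


async def authenticate(customer_id: str) -> bool:
    await asyncio.sleep(0.01)  # simulated auth check
    return True


async def open_conversation(customer_id: str) -> str:
    # Start the slow call without awaiting it, so it runs in the background.
    history_task = asyncio.create_task(fetch_order_history(customer_id))
    if not await authenticate(customer_id):
        history_task.cancel()
        return "Sorry, I couldn't verify your account."
    # By now the history request has been in flight for the whole auth step.
    history = await history_task
    return f"Welcome back. I can see {len(history)} recent orders; is this about {history[-1]}?"


greeting = asyncio.run(open_conversation("c42"))
print(greeting)
```

Because the history request starts before authentication finishes, the total wait is closer to the slower of the two calls than to their sum.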

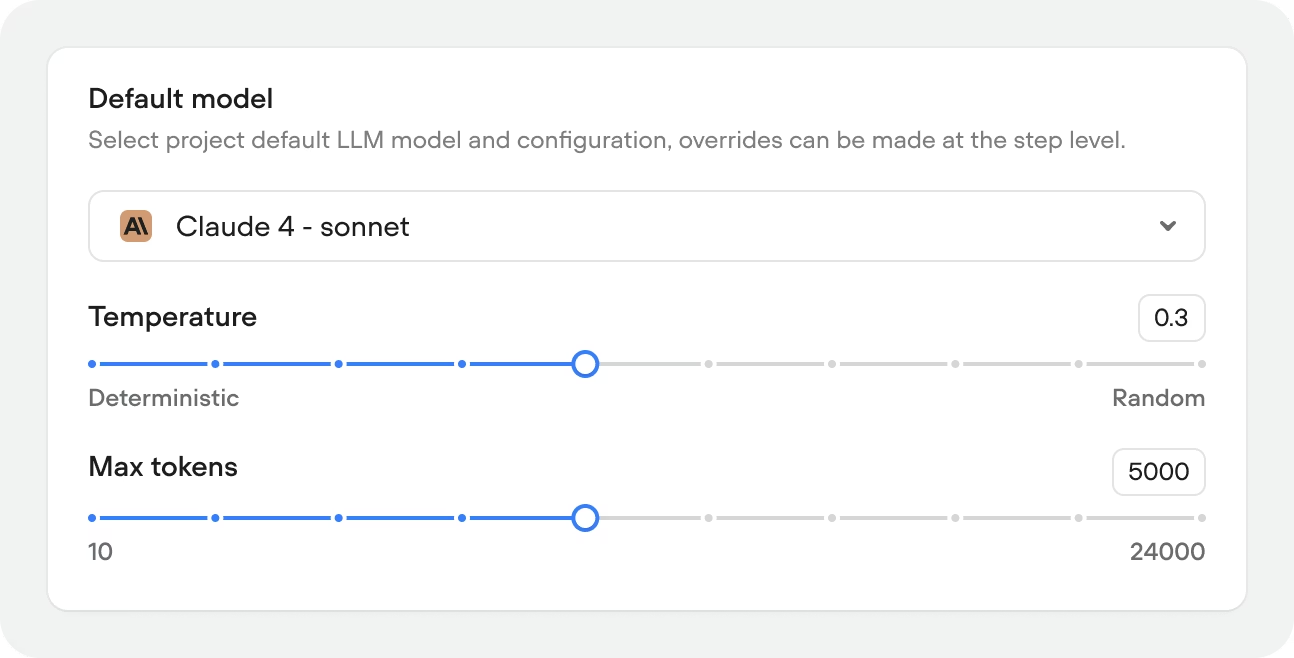

We don’t believe in the ‘one model fits all’ mentality. Not every turn of a conversation needs the same model. V4 lets you assign models strategically — a fast, lightweight model for greetings and routing, a powerful reasoning model for complex playbooks.
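
In configuration terms, assigning models by stage might look something like the sketch below. The stage names and the model identifiers (`fast-small`, `reasoning-large`) are placeholders invented for illustration, not real model names or Voiceflow settings.

```python
# Hypothetical mapping from conversation stage to model tier.
MODEL_STACK = {
    "greeting": "fast-small",
    "routing": "fast-small",
    "playbook": "reasoning-large",
}
DEFAULT_MODEL = "fast-small"  # assumption: unknown stages fall back to the cheap tier


def model_for(stage: str) -> str:
    """Return the model assigned to a stage, or the default if none is set."""
    return MODEL_STACK.get(stage, DEFAULT_MODEL)


print(model_for("routing"))
print(model_for("playbook"))
```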

Every project ships with recommended model stacks balanced for cost and performance. In-app evaluations let you test models against your own conversations before going live. At enterprise scale, this compounds across millions of interactions: better responses, lower cost, faster latency. You optimize your model stack the way that works best for your use case.

V4's Context Engine helps you avoid runaway token costs with customizable memory behaviour.

Short-term memory is configurable per project: dial it up for complex multi-step conversations, dial it down for high-volume, fast-resolution use cases where only the last few turns matter.

Long-term memory automatically extracts and stores key facts — customer name, account number, preferences — separately from conversation history. As older messages filter out of the context window, the details that matter persist. Your agent stays sharp across long conversations without carrying the full transcript.
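
The two memory types can be sketched together. This is an illustration under loud assumptions: the `Memory` class is hypothetical, and `extract_facts` uses two simple regular expressions where a real system would presumably use a model to pull out key facts.

```python
import re
from dataclasses import dataclass, field


def extract_facts(message: str) -> dict[str, str]:
    """Regex stand-in for fact extraction (assumption, for illustration only)."""
    facts = {}
    name = re.search(r"my name is (\w+)", message, re.IGNORECASE)
    if name:
        facts["customer_name"] = name.group(1)
    account = re.search(r"account (?:number )?(\d+)", message, re.IGNORECASE)
    if account:
        facts["account_number"] = account.group(1)
    return facts


@dataclass
class Memory:
    max_turns: int  # short-term window size, configured per project
    turns: list[str] = field(default_factory=list)
    facts: dict[str, str] = field(default_factory=dict)  # long-term store

    def __post_init__(self) -> None:
        if self.max_turns < 1:
            raise ValueError("max_turns must be at least 1")

    def add(self, message: str) -> None:
        # Facts are saved before the window is trimmed, so they outlive the turn.
        self.facts.update(extract_facts(message))
        self.turns.append(message)
        self.turns = self.turns[-self.max_turns:]

    def context(self) -> str:
        """Build what the model would see: stored facts plus recent turns only."""
        fact_lines = [f"{key}: {value}" for key, value in self.facts.items()]
        return "\n".join(["Known facts:", *fact_lines, "Recent turns:", *self.turns])


mem = Memory(max_turns=2)
for msg in [
    "Hi, my name is Dana",
    "My account number 12345 was charged twice",
    "It happened on Monday",
    "Can you fix it?",
]:
    mem.add(msg)

print(mem.context())
```

After four messages with a window of two, the first two turns are gone from `mem.turns`, but the name and account number they contained are still in `mem.facts`.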

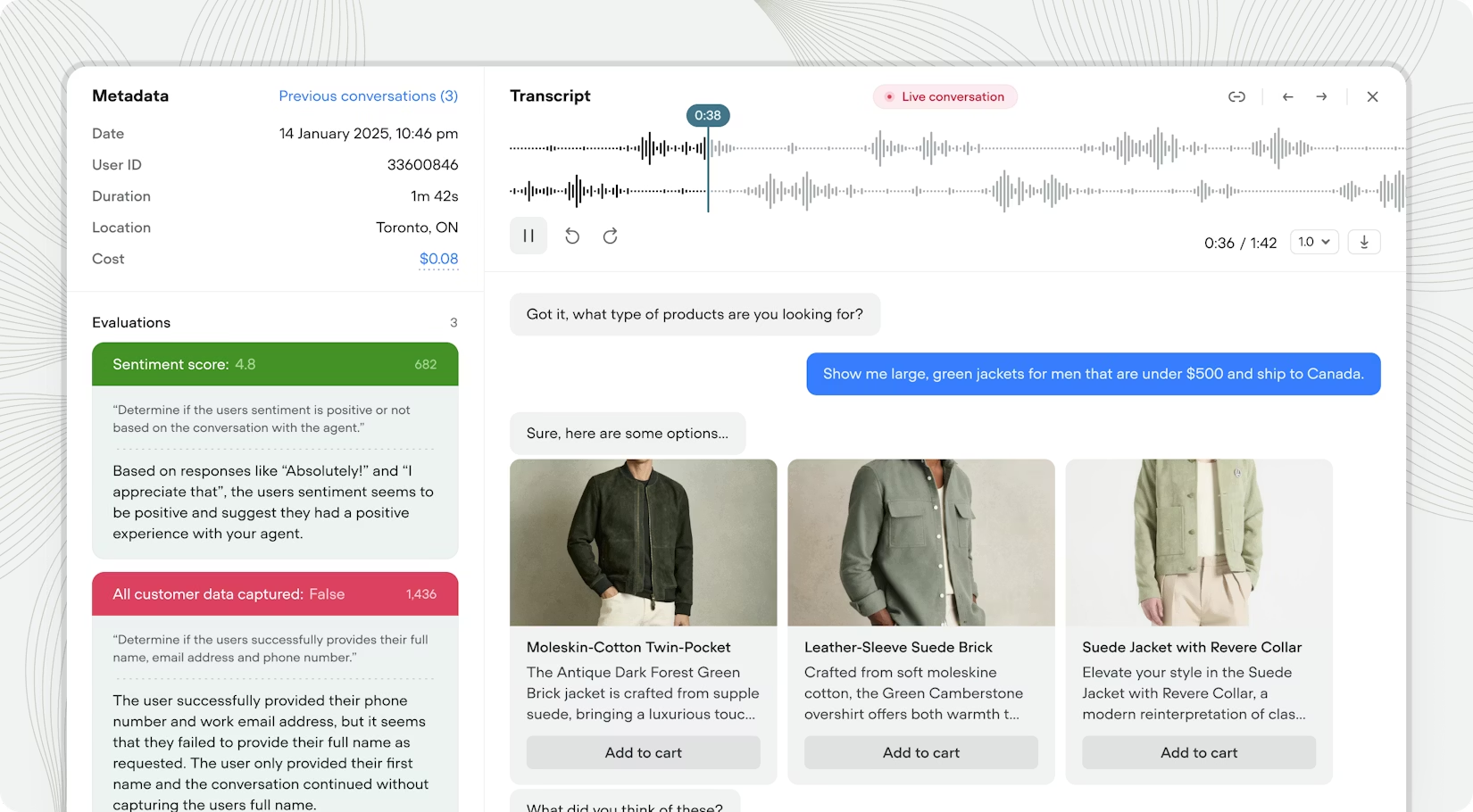

Actionable insight is key when launching AI agents. Voiceflow’s Observability Suite turns customer conversations into high-level insights that enterprise teams can work with.

On previous-generation platforms, maintenance effort scaled exponentially: more complexity meant more flows, more connections, and more surface area for things to break. V4 takes these insights and scales architecturally instead.

Your agent handles routing complexity that would have required hundreds of manual connections. The Context Engine keeps costs predictable as you add capabilities. Tools connect your agent to your entire stack. The observability layer — transcripts, evaluations, analytics — closes the loop so you can ship with confidence and improve continuously.

This is the infrastructure for AI agents that handle real customer conversations at enterprise scale.

V4 is live. Start building.